What Defensible Screening Actually Means

Defensible screening in rental housing means a tenant screening decision can be traced to a documented rule, explained after the fact, attributed to the person or system that made it, challenged through a defined dispute process, and supported by records that existed before the dispute began.

That is the standard.

But most of the industry runs on a version of screening that doesn’t hold up when someone asks why.

Not because the intent is bad. Because the process was built for speed, not for scrutiny. And in this space, scrutiny is inevitable.

I’ve worked this problem from three angles: building screening products at a credit bureau, analyzing identity risk at the application layer in fraud detection, and operating inside a Consumer Reporting Agency accountable for the accuracy of the report itself.

Across all three, the pattern is consistent.

The question isn’t whether a decision was made. It’s whether you can still explain it six months later, with the original applicant standing next to an attorney.

At its core, defensible screening is not about the outcome. It’s about whether the decision can be reconstructed and justified after the fact.

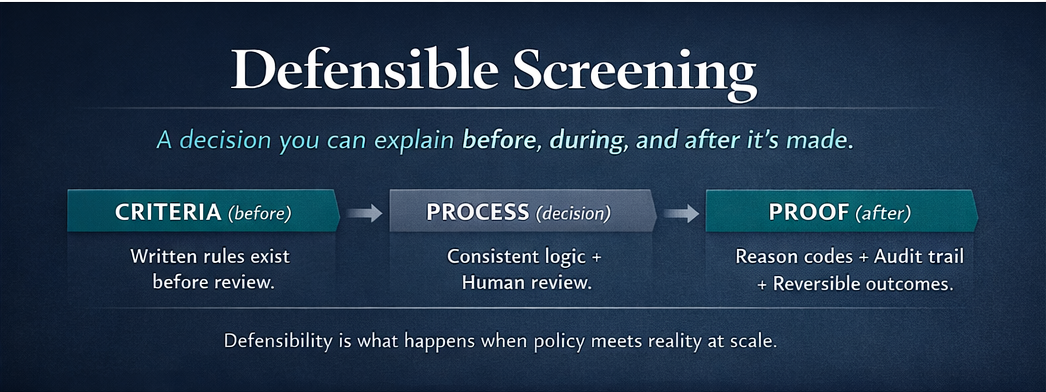

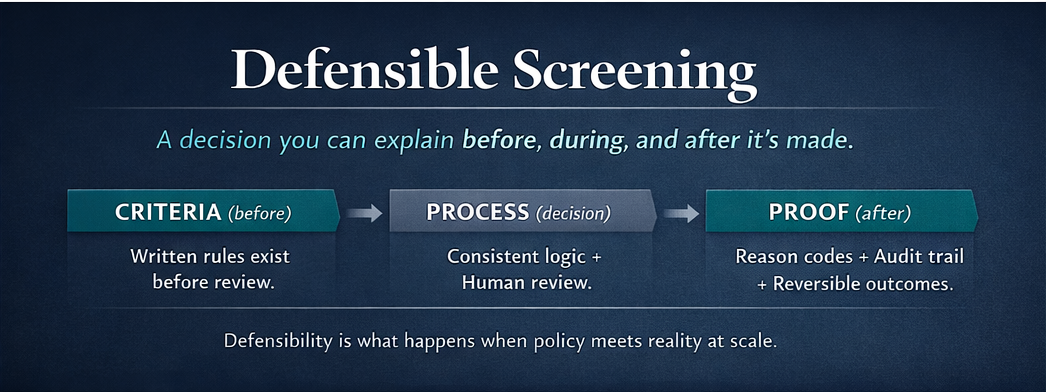

A defensible screening system produces decisions that can be explained before, during, and after they’re made.

What makes defensible screening possible

A defensible process doesn’t exist in isolation.

It depends on the structure of the system behind it.

In rental housing, that structure is often defined by whether the screening provider operates as a Consumer Reporting Agency under the Fair Credit Reporting Act (FCRA).

That classification determines whether the process includes:

- accuracy requirements

- dispute rights

- defined responsibilities for decision-making

Without those elements, a process may appear consistent, but it lacks the mechanisms needed to support and correct its decisions.

Why "we used the software" isn't an answer

I want to be specific about what non-defensible looks like, because it hides in plain sight.

An operator uses a third-party screening score to deny an applicant. The score comes back below threshold. The denial goes out. No documentation of which rule triggered the threshold. No record of what data the score weighted. No indication that the applicant's individual circumstances were considered.

The adverse action notice:

- doesn't name the Consumer Reporting Agency that furnished the report

- doesn't tell the applicant they have the right to dispute the information

- doesn't clarify that the operator made the decision, not the CRA

That's not an edge case. That's a normal Tuesday for a lot of property management teams.

Now picture a fair housing complaint. Or an FCRA dispute. Or a regulator audit. The question isn't "did you screen this person?" It's "can you explain how your process applied your criteria to this specific person's specific information?" If the answer is "the vendor scored them low," that's not an explanation. It's a handoff of accountability to a company that will point right back at you.

Louis v. SafeRent Solutions settled in November 2024 for $2.275 million. The core problem wasn't malice. It was opacity. SafeRent's algorithm issued scores with no disclosed weighting, no adjustment for housing vouchers (the public housing authority covered 73% of the named plaintiffs' monthly rent directly), and no ability for landlords to understand what drove the output. The court allowed Fair Housing Act claims to proceed against the vendor, not just the operator who used the tool. The settlement required that for voucher holders, SafeRent can no longer issue approve-or-decline recommendations unless the model has been independently validated for fairness.

Read that last sentence again. An algorithmic recommendation isn't a decision. Without explainability, it's liability transfer.

HUD's 2016 guidance on criminal history and disparate impact put the industry on notice that blanket screening policies carried Fair Housing risk. That guidance was rescinded in 2025. The underlying disparate impact exposure under the Fair Housing Act didn't go away with it. Louis v. SafeRent had already settled, in November 2024, on a disparate impact theory. The CFPB has made clear in enforcement actions that sloppy public-record handling and weak matching logic don't meet the FCRA's accuracy standard under Section 607(b). The regulatory signal has been consistent for a decade. What's changed is enforcement follow-through.

I write about these cases regularly in Screening For The Now. The pattern is consistent: the process that failed wasn't secret or unusual. It was standard practice that nobody had tested against a hard question.

Five traits. The ones that actually matter.

A defensible process isn't complicated. It's just disciplined.

Written criteria. The standard exists before the application arrives. This sounds obvious. It isn't. I've seen operators with screening "policies" that exist only in the institutional memory of whoever handles applications that week. When that person leaves, the policy leaves with them. That's not policy. That's tribal knowledge.

Consistent logic. If the same facts appear, the same rule fires. You don't have to automate everything. But the rules can't change depending on who's reviewing the file that day. Variance without documentation is discrimination risk you can't see until someone maps your outcomes.
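To make those first two traits concrete, here is a minimal sketch, not a recommended policy. Every name and threshold in it (`Criteria`, `min_income_to_rent`, the 2.5 ratio, the rule codes) is hypothetical and chosen only for illustration. The point is the shape: the standard is a versioned object that exists before any application does, and evaluation is a pure function of the facts and that standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criteria:
    """Hypothetical written standard, versioned so it can be cited later."""
    version: str
    min_income_to_rent: float  # illustrative ratio, not a recommendation
    max_evictions: int


@dataclass(frozen=True)
class Application:
    monthly_income: float
    applicant_rent_share: float  # portion of rent the applicant pays, after any voucher
    eviction_count: int


def evaluate(app: Application, criteria: Criteria) -> list[str]:
    """Return the codes of the rules that failed.

    No reviewer identity, date, or mood is an input, so the same facts
    under the same criteria version always produce the same list.
    """
    failed = []
    if app.monthly_income < criteria.min_income_to_rent * app.applicant_rent_share:
        failed.append("INCOME_BELOW_RATIO")
    if app.eviction_count > criteria.max_evictions:
        failed.append("EVICTIONS_ABOVE_MAX")
    return failed


CRITERIA_V1 = Criteria(version="2024-01", min_income_to_rent=2.5, max_evictions=0)
app = Application(monthly_income=4000, applicant_rent_share=2000, eviction_count=0)
print(evaluate(app, CRITERIA_V1))  # ['INCOME_BELOW_RATIO']
```

If a rule changes, the sketch assumes you publish a new `Criteria` version rather than editing the old one, so a decision made in March can still be checked against the standard that applied in March.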

Structured routing for ambiguity. Good systems don't automate uncertainty. They route it. Gray areas, incomplete records, individualized circumstances that require human judgment: these need a documented path, not an ad hoc one. The FCRA's "reasonable procedures" standard under Section 607(b) is built on the same idea. Reasonable isn't a vibe. It's a process.
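A rough sketch of what routing can look like follows. The inputs, the `0.90` match-confidence cutoff, and the route names are all assumptions made up for the example; your own triggers will differ. What matters is that an ambiguous file leaves with a named route and the reasons it was routed, rather than an automated answer.

```python
from enum import Enum


class Route(Enum):
    AUTO_PROCEED = "auto_proceed"
    MANUAL_REVIEW = "manual_review"


def route(record_complete: bool,
          identity_match_confidence: float,
          applicant_submitted_context: bool) -> tuple[Route, list[str]]:
    """Send ambiguous files to a documented review queue instead of auto-deciding."""
    reasons = []
    if not record_complete:
        reasons.append("INCOMPLETE_RECORD")
    if identity_match_confidence < 0.90:  # assumed cutoff, for illustration only
        reasons.append("WEAK_IDENTITY_MATCH")
    if applicant_submitted_context:
        reasons.append("INDIVIDUALIZED_CIRCUMSTANCES")
    return (Route.MANUAL_REVIEW if reasons else Route.AUTO_PROCEED), reasons


print(route(True, 0.99, False))
print(route(False, 0.72, True))
```

The second call returns `MANUAL_REVIEW` along with all three reasons, which is the record a reviewer, and later an auditor, would start from.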

Reason codes at the time of the decision. Not a summary written after a complaint comes in. At the time. If your process can't attach a reason to a suppression, an escalation, a denial, or a documentation request as it happens, you're producing narrative, not records.
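One way to picture the difference between records and narrative is the sketch below. It assumes a hypothetical `DecisionRecord` and a plain in-memory list standing in for whatever append-only store you actually use. The record refuses to exist without a reason code, and the timestamp is set when the record is created, not when someone asks for it.

```python
import json
from dataclasses import dataclass, asdict, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class DecisionRecord:
    application_id: str
    action: str  # e.g. "denial", "escalation", "suppression", "documentation_request"
    reason_codes: tuple[str, ...]
    criteria_version: str
    decided_by: str  # person or system identifier
    decided_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def __post_init__(self):
        # An action with no reason attached is exactly the gap described above.
        if not self.reason_codes:
            raise ValueError("an action without a reason code is not a record")


def append_record(log: list[str], record: DecisionRecord) -> None:
    """Append a serialized record; existing entries are never rewritten."""
    log.append(json.dumps(asdict(record), sort_keys=True))


log: list[str] = []
append_record(log, DecisionRecord(
    application_id="A-1001",
    action="denial",
    reason_codes=("INCOME_BELOW_RATIO",),
    criteria_version="2024-01",
    decided_by="reviewer-7",
))
print(log[0])
```

A Python list is obviously not tamper-evident storage. The sketch only shows what has to be captured at decision time for the record to predate any dispute.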

A real dispute path. Section 611 of the FCRA requires Consumer Reporting Agencies to reinvestigate disputed information, generally within 30 days. It exists because screening data is fallible. Records are incomplete. Matching errors happen. The question isn't whether errors occur. It's whether your process assumes the first outcome is always the final outcome. If it does, it's brittle. Brittle doesn't survive scrutiny. It creates it.
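As a small illustration, here is how the general 30-day window could be tracked. This is a sketch under simplifying assumptions: it ignores any extension the statute allows, and how days are counted in a real dispute is a legal question, not a coding one. The function names are hypothetical.

```python
from datetime import date, timedelta

REINVESTIGATION_DAYS = 30  # the general window cited above; extensions are not modeled


def reinvestigation_due(received: date) -> date:
    """Date by which the reinvestigation should be complete, under the simple 30-day rule."""
    return received + timedelta(days=REINVESTIGATION_DAYS)


def is_overdue(received: date, today: date, resolved: bool) -> bool:
    """True when an unresolved dispute has passed its due date."""
    return not resolved and today > reinvestigation_due(received)


print(reinvestigation_due(date(2025, 3, 1)))                 # 2025-03-31
print(is_overdue(date(2025, 3, 1), date(2025, 4, 1), False))  # True
```

A process that assumes the first outcome is final has nowhere to put a date like this. A process with a real dispute path tracks it from the day the dispute arrives.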

A defensible process isn’t defined by the outcome. It’s defined by whether the system can support the outcome when that outcome is questioned.

Where things actually break

Here's the part most compliance conversations miss.

The failure usually isn't the policy. It's the gap between the written policy and what the workflow actually does. Three places this shows up consistently.

The rule was never written down, or the written version was never followed. The team operates on what everyone just knows. Where a policy document exists, it says one thing and the actual practice does another. And the records, if they exist at all, reflect the practice, not the policy.

The reviewer overrode the rule without documentation. Manual review isn't wrong. It's often exactly right. But manual override with no record of why is an exposure point, not a feature. "We used judgment" is a starting point for discovery. Not a defense.
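A minimal sketch of a documented override might look like this. The `Override` type and its field names are hypothetical. The only design choice that matters is that the override cannot be saved without a written justification and a named reviewer.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Override:
    application_id: str
    rule_outcome: str   # what the written criteria produced
    final_outcome: str  # what the reviewer decided instead
    reviewer: str
    justification: str
    recorded_at: datetime


def record_override(application_id: str, rule_outcome: str, final_outcome: str,
                    reviewer: str, justification: str) -> Override:
    """Create an override record, refusing undocumented or anonymous overrides."""
    if not justification.strip():
        raise ValueError("override requires a written justification")
    if not reviewer.strip():
        raise ValueError("override requires a named reviewer")
    if rule_outcome == final_outcome:
        raise ValueError("not an override: final outcome matches the rule")
    return Override(application_id, rule_outcome, final_outcome,
                    reviewer, justification.strip(), datetime.now(timezone.utc))


ov = record_override(
    "A-1001", "deny", "approve", "reviewer-7",
    "Eviction filing was dismissed; applicant supplied the court order.",
)
print(ov.final_outcome, "by", ov.reviewer)
```

With something like this in place, "we used judgment" comes with a name, a time, and a reason attached.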

Adverse action, disputes, and follow-ups were treated as admin tasks. They're part of the decision architecture. The FCRA doesn't separate them. If adverse action notices are going out without naming the CRA that furnished the report, if dispute documentation is incomplete, if follow-up timelines are loose, that's where the compliance exposure lives. Not in the initial screening logic.
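Here is a sketch of a pre-send check that treats the notice as part of the decision rather than paperwork. It covers only the three elements discussed in this piece, not the full statutory list, and the field names are invented for the example. Treat it as a starting checklist, not a compliance tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AdverseActionNotice:
    cra_name: str                    # the CRA that furnished the report
    states_cra_did_not_decide: bool  # notice says the operator made the decision
    states_dispute_right: bool       # notice tells the applicant they can dispute


def missing_elements(notice: AdverseActionNotice) -> list[str]:
    """List which of the three elements discussed here are absent from the notice."""
    missing = []
    if not notice.cra_name.strip():
        missing.append("CRA_NOT_NAMED")
    if not notice.states_cra_did_not_decide:
        missing.append("DECISION_MAKER_NOT_CLARIFIED")
    if not notice.states_dispute_right:
        missing.append("DISPUTE_RIGHT_NOT_STATED")
    return missing


draft = AdverseActionNotice(cra_name="", states_cra_did_not_decide=False,
                            states_dispute_right=True)
print(missing_elements(draft))  # ['CRA_NOT_NAMED', 'DECISION_MAKER_NOT_CLARIFIED']
```

A check like this belongs in the workflow that sends the notice, so an incomplete one is blocked before it goes out rather than found in discovery.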

What this adds up to

Defensible screening isn't:

- stricter criteria

- more denials

- handing the decision to a vendor and calling it a process

It's a workflow where criteria exist before they're applied, logic behaves consistently, ambiguous cases surface instead of disappear, and the outcome can be explained to someone who wasn't in the room. Maybe months later. Maybe in writing. Maybe both.

The operators who have this figured out didn't get there by buying a better tool. They got there by deciding their process would hold up before anyone asked. Compliance built into the workflow is cheap. Compliance retrofitted after a complaint is expensive.

The process either holds up or it doesn't. The time to find out isn't when someone asks.